Best SSDs 2023: The top NVMe and SATA drives around

The best solid-state drives to meet your needs, whether that's speed, reliability, or value for money

If your computer is feeling slow, the cause might not be your CPU or memory – it could just as easily be your storage.

A slow hard disk can have a huge impact on the responsiveness of your PC, particularly when it comes to starting up Windows, opening apps and working with large datasets. Upgrading to a high-speed SSD can make a sluggish desktop fly.


When choosing your drive, however, raw speed isn't the only factor you should take into account. Reliability is a hugely important issue, especially for business use. SSDs have a finite lifespan, and they’re only guaranteed to sustain a certain number of write and erase cycles. Enterprise drives are typically designed to withstand much more intensive use than consumer models, and it’s crucial to make sure the drives in your machines are up to your workloads.

Remember, though: even if your chosen SSD is designed for heavy usage, unexpected hardware failures do happen, and your warranty won’t bring back any data that gets lost. It remains absolutely essential to keep regular backups.

Another factor to consider when picking an SSD is security. Some drives feature built-in encryption, which provides a welcome layer of protection for those working on sensitive or confidential information.

Finally, there’s the question of price. Larger capacities naturally cost more, but you don’t necessarily need to buy a huge drive: it may make sense to choose a modestly-sized SSD for your operating system and applications, and partner it with a much cheaper mechanical disk for data that doesn’t need high-speed access.
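To compare drives of different sizes on price, it helps to work out the cost per gigabyte. The sketch below is one simple way to do that, not a definitive method. The function name `cost_per_gb_pence` and its `vat_rate` parameter are our own illustrative choices, and it assumes decimal capacities (1TB = 1,000GB). The example uses the 500GB Samsung 870 EVO price quoted later in this article.

```python
def cost_per_gb_pence(price_gbp: float, capacity_gb: float, vat_rate: float = 0.0) -> float:
    """Return the cost per gigabyte in pence.

    price_gbp   -- purchase price in pounds
    capacity_gb -- capacity in decimal gigabytes (assumption: 1TB = 1,000GB)
    vat_rate    -- optional VAT to add, e.g. 0.2 for 20% (hypothetical parameter)
    """
    if capacity_gb <= 0:
        raise ValueError("capacity_gb must be positive")
    return price_gbp * (1 + vat_rate) * 100 / capacity_gb


# Samsung 870 EVO, 500GB, priced at £56 exc VAT in this roundup
evo = cost_per_gb_pence(56, 500)
print(f"870 EVO 500GB: {evo:.1f}p per GB")
```

Whether you include VAT changes the result, so make sure every drive you compare is calculated on the same basis.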

Whether you're building a custom system from scratch or upgrading an existing machine, there are plenty of SSD options to choose from: here’s a selection of our favourites.

WD Black SN850

Upgrading or building a PC? Then the WD Black SN850 makes a superb system drive. It takes full advantage of the huge bandwidth provided by the PCI Express Gen4 bus to offer performance most rival SSDs can’t match. It also boasts strong multi-threaded performance and, despite all its positive aspects, is fairly affordable too. It’ll set you back around £140, but be aware that its phenomenal performance depends on the PCI Express Gen4 interface. If you install it in an older PC without Gen4 support, it will work but won’t be as fast.

| Specification | Value |
| --- | --- |
| Capacity | 1TB |
| Cost per GB | 18p |
| Interface | PCIe 4 |
| Claimed read | 7,000MB/sec |
| Claimed write | 5,300MB/sec |

Price when reviewed: £140 exc VAT

Read our full WD Black SN850 review here

Samsung 870 QVO

The Samsung 870 QVO is almost a carbon copy of the 870 Evo, as it’s effectively the same drive but with four-bit QLC chips in place of the more mainstream TLC tech. It offers the additional capacity without sacrificing speed, and is one of the speediest SATA drives we’ve seen. It’s also cheaper than its TLC counterpart, at £129 for 2TB.

Unfortunately, the limited write endurance of the 870 QVO means it won’t be the best choice for intensive, mission-critical roles. However, if you want to give a new lease of life to a laptop or lightweight PC, it can provide the space you need at an unbeatable price.

| Specification | Value |
| --- | --- |
| Capacity | 2TB |
| Cost per GB | 8p |
| Interface | SATA 6Gbits/sec |
| Claimed read | 560MB/sec |
| Claimed write | 530MB/sec |

Price when reviewed: £129 exc VAT

Read our full Samsung 870 QVO review here

Samsung 980 Pro

The Samsung 980 Pro delivers super-fast file transfers once plugged into a PC or laptop with a PCI Express Gen3 motherboard. On systems with the Gen4 bus, the drive can go even faster, reaching an incredible 5,468MB/sec. With speeds like this, the high-end drive doesn’t come cheap. The entry-level 250GB model costs around £59 exc VAT, with 500GB, 1TB, and 2TB variants progressively increasing in price to a steep £288 exc VAT. If you need top performance and hardware encryption, then this component delivers fully on both.
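To see why the host interface matters so much, the rough sketch below estimates the theoretical ceiling of each connection from its line rate and encoding overhead. It is a back-of-the-envelope calculation rather than a benchmark. It assumes a four-lane link, which is typical for M.2 NVMe drives, and it ignores protocol overhead, so real-world throughput will be somewhat lower. The function and constant names are our own.

```python
# Per-lane transfer rate (gigatransfers/sec) and line-encoding efficiency.
# PCIe Gen3 and Gen4 both use 128b/130b encoding.
PCIE_GENERATIONS = {
    3: (8.0, 128 / 130),
    4: (16.0, 128 / 130),
}


def pcie_bandwidth_mb_s(generation: int, lanes: int = 4) -> float:
    """Theoretical PCIe link bandwidth in MB/sec (assumes x4 unless told otherwise)."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    # usable gigabits/sec per lane -> gigabytes/sec -> megabytes/sec, times lane count
    return rate_gt_s * efficiency / 8 * 1000 * lanes


def sata_bandwidth_mb_s(line_rate_gbit_s: float = 6.0) -> float:
    """Theoretical SATA bandwidth in MB/sec; SATA uses 8b/10b encoding."""
    return line_rate_gbit_s * (8 / 10) / 8 * 1000


print(f"PCIe Gen3 x4: {pcie_bandwidth_mb_s(3):.0f}MB/sec")
print(f"PCIe Gen4 x4: {pcie_bandwidth_mb_s(4):.0f}MB/sec")
print(f"SATA 6Gbits/sec: {sata_bandwidth_mb_s():.0f}MB/sec")
```

On these figures a Gen3 x4 slot tops out a little under 4,000MB/sec, so a drive with a claimed 7,000MB/sec read speed can only approach that figure on a Gen4 system. The same arithmetic explains why SATA drives cluster just below 600MB/sec.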

| Specification | Value |
| --- | --- |
| Capacity | 1TB |
| Cost per GB | 19p |
| Interface | NVMe |
| Claimed read | 7,000MB/sec |
| Claimed write | 5,000MB/sec |

Price when reviewed: £154 exc VAT

Read our full Samsung 980 Pro review here

Samsung 870 EVO

The Samsung 870 Evo doesn’t really have anything distinctive about it but equally it doesn’t get anything wrong either. With sequential read and write rates of 528MB/sec and 499MB/sec respectively, it’s as fast as any other SATA drive you can buy. Additionally, in the PCMark 10 storage benchmarks it achieved fairly good scores, racking up 1,178 in the System Disk test and 1,473 in the Data Disk stakes. By offering a five-year warranty and a promised write endurance of 600TBW for the 1TB model, it ticks all the right boxes. If you need to upgrade an old PC or laptop then this drive will get the job done.
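A write endurance rating is easier to judge when it is converted into a daily allowance. As a rough illustration, the sketch below spreads the 600TBW rating of the 1TB model evenly across the five-year warranty. The function name is our own, and it assumes 365-day years and decimal units (1TB = 1,000GB).

```python
def endurance_per_day(tbw: float, warranty_years: float, capacity_tb: float) -> tuple[float, float]:
    """Return (GB written per day, drive writes per day) allowed by an endurance rating.

    Assumes the rated terabytes written (TBW) are spread evenly over the
    warranty period, with 365-day years and decimal units.
    """
    days = warranty_years * 365
    gb_per_day = tbw * 1000 / days
    dwpd = tbw / (days * capacity_tb)
    return gb_per_day, dwpd


# Samsung 870 EVO 1TB: 600TBW over a five-year warranty
gb_per_day, dwpd = endurance_per_day(tbw=600, warranty_years=5, capacity_tb=1)
print(f"{gb_per_day:.0f}GB per day, or {dwpd:.2f} drive writes per day")
```

That works out at roughly 329GB of writes every day for five years, which is far more than a typical office PC or laptop is likely to generate.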

| Specification | Value |
| --- | --- |
| Capacity | 500GB |
| Cost per GB | 11p |
| Interface | SATA 6Gbits/sec |
| Claimed read | 560MB/sec |
| Claimed write | 530MB/sec |

Price when reviewed: £56 exc VAT

Read our full Samsung 870 EVO review here

Crucial P5 Plus 1TB

If you’re looking for a drive with fast write speeds that performs strongly in the PCMark 10 storage benchmarks, then look no further. The Crucial P5 Plus is a solid PCIe NVMe drive and a great-value option that will supercharge your storage performance. It is a notable speed upgrade over the PCI Express 3.0-based P5 and offers great value relative to its competition, priced at £131 excluding VAT.

| Specification | Value |
| --- | --- |
| Capacity | 1TB |
| Cost per GB | 15.7p |
| Interface | NVMe |
| Claimed read | 6,600MB/sec |
| Claimed write | 5,000MB/sec |

Price when reviewed: £131 exc VAT

Read our full Crucial P5 Plus 1TB review here

WD Blue SN550

If you're building a relatively simple PC or want to upgrade from SATA storage on the cheap, this might be the drive for you. This is a budget SSD that will mainly face relatively simple tasks. It has fast maximum speeds, cost only £83 when we reviewed it and is one of our recommended drives. It also has a few advantages over the SN500, including faster overall performance.

| Specification | Value |
| --- | --- |
| Capacity | 1TB |
| Cost per GB | 10p |
| Interface | NVMe |
| Claimed read | 2,400MB/sec |
| Claimed write | 1,950MB/sec |

Price when reviewed: £83 exc VAT

Read our full WD Blue SN550 review here

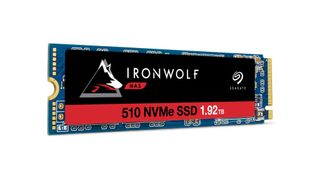

Seagate IronWolf 510

If you regularly access large amounts of data, the Seagate IronWolf 510 will have the performance and life expectancy you'll need, whether you're handling video, images or big data. It is a high-capacity device with extended durability and, with its commensurate £360 price, it may be overkill for file server caching in your average office environment.

| Specification | Value |
| --- | --- |
| Capacity | 1.92TB |
| Cost per GB | 20p |
| Interface | NVMe |
| Claimed read | 3,150MB/sec |
| Claimed write | 850MB/sec |

Price when reviewed: £360 exc VAT

Read our full Seagate IronWolf 510 review here


Adam Shepherd has been a technology journalist since 2015, covering everything from cloud storage and security, to smartphones and servers. Over the course of his career, he’s seen the spread of 5G, the growing ubiquity of wireless devices, and the start of the connected revolution. He’s also been to more trade shows and technology conferences than he cares to count.

Adam is an avid follower of the latest hardware innovations, and he is never happier than when tinkering with complex network configurations, or exploring a new Linux distro. He was also previously a co-host on the ITPro Podcast, where he was often found ranting about his love of strange gadgets, his disdain for Windows Mobile, and everything in between.

You can find Adam tweeting about enterprise technology (or more often bad jokes) @AdamShepherUK.