Best data recovery tools 2021: Restore your lost files

Deleted partitions and corrupted disks don’t have to mean irretrievable files

To adapt the famous quote, three things in life are certain: death, taxes and lost data. It's happened to us all at least once: we fire up a laptop or plug in a hard drive and learn it’s corrupted and the data on it is inaccessible. There's that immediate sinking feeling as you recall all the important files you forgot to back up, which may now be lost forever.

Fortunately, you have a number of data-recovery remedies at your disposal that don't involve paying for costly forensic retrieval services or simply writing your data off. Various software tools can restore data your operating system can’t find. We’ve tested some of the top data-recovery programs to help you find the best option for retrieving missing files.

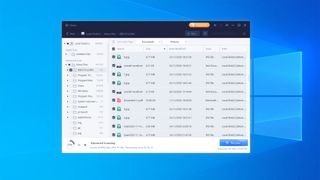

EaseUS Data Recovery Wizard

EaseUS offers a free version of its software, but it's extremely limited. A trial version of its paid-for tier lets you run a scan and preview the files found, but you can't restore them. The freeware version advertises a 2GB recovery cap, which sounds great, but it's too good to be true: the actual base limit is 500MB, and you can only unlock the remaining 1.5GB by promoting the product on your social media accounts.

Despite this, if you don't mind paying, this is a robust data recovery solution. Like many EaseUS products, Data Recovery Wizard benefits from a well-designed, modern user interface that strikes a decent balance between simplicity and functionality. Starting a scan is quick and painless and, even though the console is clean and uncluttered, advanced filtering options are only a few clicks away if you need them.

Price: £84.95 a year

Lazesoft Data Recovery

Lazesoft immediately impresses because it is one of the few data recovery tools on this list with a genuinely useful free tier. Whereas most free data recovery tools limit the user to a certain (usually fairly small) quantity of data that can be restored, Lazesoft Data Recovery Free provides almost all of its features right off the bat. Only support for Windows Server and bootable recovery media is held back for the paid editions.

Although the interface is a little dated, with what some may call a Windows Vista-esque appearance, the functionality is solid. Using the filtering options to identify and recover specific types of data can get a little frustrating, and full drive scans aren't as quick as those of some other tools on this list, but it's a well-rounded program that delivers on its promise.

Price: Free

Minitool Power Data Recovery

Minitool's user interface strikes the right balance between an uncluttered, clear layout and a solid amount of flexibility and technical depth. We were big fans of the detailed file-explorer window for previewing recovered data, complete with indicators showing whether specific files are lost or deleted.

Scans take a little longer with Minitool than with some other software, but the extra time pays off in the depth of information they return, including the dates files were last modified. Unlike other entries on this list, Minitool offers a lifetime plan for personal users in addition to monthly and annual subscriptions, which may offer better value if you’re looking for a long-term solution.

Price: £90 a year

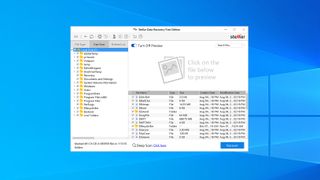

Stellar Data Recovery Wizard

Stellar Data Recovery Wizard is among the better-looking tools in this category, with a clean, modern interface, but it suffers a little from being over-simplified. The default options are all large and clearly labelled, but you’ll have to dig deeper for more granular controls. For example, the scan process doesn’t let you preview recovered files until you toggle an optional slider, whereas tools such as Minitool display that information automatically.

Like the other paid-for options on this list, Stellar offers a free version of its software that lets users recover 1GB of data without paying, but be warned: you can only recover files under 25MB in size. Upgrading to a paid subscription removes these restrictions, although only the ‘Professional’ tier and above can recover files from lost partitions.

Price: $89.99 a year

UnDeleteMyFiles Pro

Like Lazesoft Data Recovery, UnDeleteMyFiles Pro is a genuinely free app with no strings attached, and despite its mid-2000s aesthetic, it’s a perfectly capable file recovery tool. All the functionality you’ll need is here, although the layout – with individual names and icons for different tasks – can be slightly confusing compared to more menu-driven rivals.

Get your head around the UI, though, and you’ll find a tool that offers fast and reliable recovery operations. Advanced filtering isn’t as intuitive as it is in some other tools on this list, but it does the job, and the app even includes some more niche tools, such as email recovery and a forensic file deletion tool.

Price: Free

Please note that while this round-up was accurate at the time of publication, prices have since been updated to reflect the standard pricing for these tools as of December 2022. We will update our listing for 2023 in due course.

Adam Shepherd has been a technology journalist since 2015, covering everything from cloud storage and security to smartphones and servers. Over the course of his career, he’s seen the spread of 5G, the growing ubiquity of wireless devices, and the start of the connected revolution. He’s also been to more trade shows and technology conferences than he cares to count.

Adam is an avid follower of the latest hardware innovations, and he is never happier than when tinkering with complex network configurations, or exploring a new Linux distro. He was also previously a co-host on the ITPro Podcast, where he was often found ranting about his love of strange gadgets, his disdain for Windows Mobile, and everything in between.

You can find Adam tweeting about enterprise technology (or more often bad jokes) @AdamShepherUK.