Best IDEs: The perfect code editors for beginners and professionals

Level up your programming with these top integrated development environments

Using something every day can almost cause you to become immune to its impact. This is very much the case with text editors. Serving as a core utility for development teams of all sizes and disciplines, their capabilities can easily go unnoticed when things are going well. Similarly, if you're in the position of having to augment your development environment every couple of projects, chances are you haven't found the right editor for you.

Just as there's no such thing as a 'typical developer', there really isn't a one-size-fits-all solution for your development environment. In the following run-down, we'll take a look at the most commonly used text editors out there on the market and break down their strengths, weaknesses, and why they might - or as mentioned, might not - be right for you.

Atom

Who it is for: Best for front-end and senior developers

Pros

- Highly customisable

- Excellent GitHub integration

- Completely free with an active community

Cons

- Takes a while to configure

- Uses a lot of RAM

Calling itself a 'hackable text editor for the 21st century', Atom is an open-source editor originally developed by GitHub. It was made with developers in mind, and that intention shows in many of its core capabilities. It's most apparent in the built-in package manager, which installs Atom's own GitHub package by default and serves as one of the most user-friendly ways to manage git inside your development environment - second only to GitHub Desktop. But what you trade off in terms of complexity, you more than gain back when it comes to additional functionality. Case in point, the latest release lets you review pull requests and add comments from right within the IDE. Any organisation out there working to make pull request reviews a more enjoyable experience deserves recognition.

Atom's simplified UI, customisable nature and non-existent price tag are exactly what make it so popular among developers. Its one key drawback is its performance. The editor can often be slow to start and uses up large amounts of memory. Combine this with habitual use of the notoriously RAM-hungry Chrome, and you're likely to encounter repeated crashes and real interruptions to your workflows unless you're running everything from a super powerful rig.

One of Atom's key differentiators is its community. The team behind Atom really put a lot of care into each of their releases. It's designed with thought, constructed with attention to detail and, as long as you're not running too many programs at once or relying too heavily on the app's search or grep function, it can be a real joy to use.

VSCode

Who it is for: Best for web developers and juniors

Pros

- Accessible on-boarding flow

- Extensive range of plugins

- Completely free and heavily documented

Cons

- Autocomplete recommendations can often be unhelpful

- Python tooling could be better

VSCode is an excellent choice for your day-to-day setup. The versatility of Microsoft's completely free editor is hard to match. While the Python tooling leaves a lot to be desired, for your run-of-the-mill JAMstack application, most of what you need should work on startup without any need for complex configuration. The IDE itself is designed to be lightweight; having been initially developed as a browser-based code editor, it only requires a minimum of 1GB of RAM and 200MB of disk space to get up and running.

Bolting on additional extensions is such a seamless process that it's actually quite enjoyable. That being said, it's very easy to get click-happy on the VSCode Marketplace and find yourself adding a whole suite of features that you don't actually need. More often than not, if you find that VSCode is eating up a lot of your CPU, it's likely down to the combination of extensions that you're running.

If you tend to prefer a more ‘hands on’ approach when familiarising yourself with a new editor rather than poring through documentation, then you'll probably enjoy VSCode's handy Interactive Playground. Serving as a practical walk-through, the Interactive Playground is sprinkled with fully functional code snippets designed to help you experience some of the program's best features in a hands-on way.

But by far one of the best features of the editor is its built-in command-line interface (CLI), which lets you open files and folders in VSCode right from your terminal. To install the shortcut, bring up the editor’s ‘Spotlight Search’-style Command Palette, start typing ‘shell command’ and select the option to “Install ‘code’ command in PATH”. Just like that, you’ll be able to navigate in and out of your directories through your terminal and enter the command ‘code’ to instantly bring up your editor in the correct workspace, ready for you to jump in and make any changes you need to. Truly satisfying.
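Once the ‘code’ command is on your PATH, you can also call it from your own scripts. The following is only a minimal Python sketch of that idea, not an official recipe: the helper names `build_code_command` and `open_in_vscode` are made up for illustration, and it assumes the launcher has been installed under the name `code`.

```python
import shutil
import subprocess


def build_code_command(target, line=None):
    """Build the argument list for the `code` launcher (hypothetical helper)."""
    if line is not None:
        # --goto accepts file:line and places the cursor on that line.
        return ["code", "--goto", f"{target}:{line}"]
    # With a plain path, `code` opens that file or folder.
    return ["code", str(target)]


def open_in_vscode(target, line=None):
    """Launch the editor, but only if `code` can be found on PATH."""
    launcher = shutil.which("code")
    if launcher is None:
        return False  # assumption: the shell command has not been installed
    command = build_code_command(target, line)
    subprocess.run([launcher, *command[1:]], check=False)
    return True


if __name__ == "__main__":
    # Print the commands rather than launching anything.
    print(build_code_command("."))
    print(build_code_command("src/app.py", line=42))
```

Calling `open_in_vscode(".")` from a project directory should have the same effect as typing ‘code .’ in the terminal; it returns False rather than raising an error when the launcher is missing.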

JetBrains Suite

Who it is for: Best for back-end developers and students

Pros

- Great tooling without the need for additional extensions

- Genuinely helpful autocomplete recommendations

- Excellent debugger

Cons

- Expensive on both a team and individual license

- Prone to performance issues

If you've worked as a back-end developer within a commercial setting, then you've likely come into contact with the JetBrains suite of apps before. While the company itself creates a whole host of software development tools and applications, out of its ten IDEs, it's best known for IntelliJ, PhpStorm and PyCharm, which cater to the development of Java, PHP and Python applications, respectively.

One thing you'll immediately notice about JetBrains IDEs is their specific language focus. While this narrow focus is particularly restrictive if you're yet to find your programming niche, it pays dividends when it comes to debugging, running tests and inspecting unfamiliar code. The documentation and online reviews honestly don't do justice to the sheer impact these IDEs' most useful features can have on your workflows. The auto-complete quick fixes and error-highlighting can assist you in producing noticeably better work at a much faster rate. While the application itself is rather bulky and uses up a lot of memory, if you're working with an especially gritty, complex codebase in relative solitude, then it could be worth making the switch.

All of this built-in functionality comes at a price beyond eating up your CPU. Depending on which of the range you opt for, you're looking at paying £69 for your first year of usage, with that price point going up to £119 if you choose to get fully kitted out with the complete IntelliJ toolset. Accompanying the steep price tag is a particularly steep learning curve. It doesn't take long to get used to the array of shortcuts that you'll need to memorise, but the JetBrains IDEs are nowhere near as accessible for beginners as many of the free-to-use alternatives, and given the hefty price tag, you're likely to feel a bit short-changed if you're not putting it to use on a really bulky project.

Xcode

Who it is for: Best for developers of apps in the Apple ecosystem

Pros

- Official Apple support

- Extensive documentation

- Multi-platform development

- Free

Cons

- Can only run on a Mac

- Useful for certain applications, not a full-purpose IDE

Apple’s own programming language, Swift, was originally built to replace Objective-C, which hadn’t been significantly iterated on since the 1980s. It has also been growing in popularity among the coding community, according to the TIOBE index, in which Swift is now ranked in the top 10 most in-demand languages.

In terms of determining the best IDE for Swift, there are a number of options available to developers. But Apple recommends Xcode - a free application available on the Mac App Store and the natural choice for anyone with an interest in developing for the Apple ecosystem. In addition to Swift, Xcode supports development in an array of popular languages such as C, C++, Objective-C, Java, Python, Ruby, and more.

One of the standout features of Xcode is Playgrounds, which lets developers open a special type of Swift file in which code is rendered in real time. Originally created by Apple to showcase Swift tutorials, the feature is especially useful for beginners learning the basics, or experienced developers prototyping a new feature away from the main app’s codebase.

Developers have access to built-in simulators for most Apple products, making it easy to see how their apps appear on different devices, such as Apple Watch, Macs, iPhones, iPads, and Apple TV. There are also strong collaboration features for teams who want to leave comments on portions of code, review code, or merge pull requests to the likes of GitHub directly from within Xcode, all part of the latest Xcode 13 update.

Sublime

Who it is for: Best for quick edits and setting as your default application

Pros

- Unbeatable in terms of speed

- Great for concurrent editing on multiple windows

- Minimal, un-cluttered user interface

Cons

- No obvious on-boarding flow

- Free initially but charges a fee for continual use

If you're looking for a premium product that won't weigh down your machine, then Sublime might serve as an ideal fit. Originally developed not only as a code editor but as an overall text editor, Sublime is perfect if you like working with pseudocode as the bones of your project. You'll be able to kick things off with mark-up and not have to alter your configuration to avoid a bombardment of red squiggly lines.

One of the first things you'll notice when using Sublime is how lightweight it is in every sense of the term. Clocking up a featherweight file size of 22MB, it loads pretty much instantly, is incredibly fast and legendarily stable. One of the very few drawbacks for beginners is the noticeably lightweight on-boarding flow. This even extends to the syntax highlighting which comes installed in plain-text mode, making Sublime one of the few editors that will let you paste in a large JSON object without automatically suggesting irrelevant corrections or underlining large sections of your screen in red wavy lines. This makes it an ideal choice for your default app if you've got to spend a stretch of time editing CSV, JSON or YAML files.

One thing to note is that when you open Sublime, you won't be met with a welcome screen or guided towards a navigation pane. The program very much assumes that you know what you're doing from the get-go. While this might seem a bit intimidating, the program's far more intuitive than anything from JetBrains and won't require you to input the Konami code to bring up the terminal. You might need to install an external package or two, though.

Rather than delivering a whole suite of comprehensive features, arming you with an artillery of all the tools you might need to cut a behemoth of a codebase down to size, Sublime delivers a few important things really well. It's built with purpose and performance in mind. The search function is excellent. It offers case-sensitive matching, understands regular expressions (or RegEx), supports multiple cursors for big find-and-replace jobs and is lightning-fast - the same goes for its Goto Anything file navigation system. It's just genuinely enjoyable to use. But unfortunately, it's not actually free. While you can get up and running with Sublime for nothing and ‘evaluate’ the software for free at your leisure, Sublime HQ requires you to purchase a full license for what it deems continued use, hitting you with frequent prompts to pay the $80 fee every few times you hit save.
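If you haven't used a case-sensitive, regular-expression find-and-replace before, the idea is easy to try outside of any editor. The snippet below is a rough Python sketch using the standard `re` module; it illustrates the concept only and says nothing about how Sublime implements its search. The sample text and the `replace_all` helper are invented for the example.

```python
import re

SAMPLE = "Color and color; Colour and colour."


def replace_all(text, pattern, replacement, case_sensitive=True):
    """Regex find-and-replace; returns the new text and the number of replacements."""
    flags = 0 if case_sensitive else re.IGNORECASE
    return re.subn(pattern, replacement, text, flags=flags)


if __name__ == "__main__":
    # "colou?r" matches both spellings; \b keeps the match to whole words.
    print(replace_all(SAMPLE, r"\bcolou?r\b", "hue"))
    # With case sensitivity switched off, the capitalised words match too.
    print(replace_all(SAMPLE, r"\bcolou?r\b", "hue", case_sensitive=False))
```

With case sensitivity on, only the two lowercase spellings are replaced; with it off, all four are.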

Vim

Who it is for: Best for Linux users and network security pros

Pros

- Free, open-source and available on almost all operating systems

- Ships with a tutorial

- Passionate online community

Cons

- Hard to master

- Little to no user-guidance

Vim is one of the most famous text editors in existence. Depending on your operating system of choice and programming experience, you’ll have likely heard of Vim within the context of the great Editor War - a longstanding rivalry that divides the open-source software community based upon their preference between the classic editors, Vim and Emacs. Think Marvel versus DC, but somehow even nerdier. For the purposes of this article, we’re going to sidestep that particular argument, and assess Vim purely as an alternative to more recent IDEs.

It's completely free, enjoys almost universal support, and what's more, its 30-year tenure has earned it a passionate fan base along with a variety of well-maintained open-source plugins. Its ubiquity extends to the command line - meaning that you can simply open up your terminal, type in the command 'vi' on any non-Windows machine, and bam, you're there. But the problem is that unless you know what you’re doing, you most likely won't be able to get out again (note: if this has happened to you while reading this, type the command ':q!' to escape from this hellscape).

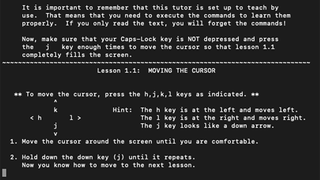

In spite of its pervasiveness, Vim is well known for being difficult to pick up. The official documentation admits as much, issuing the following warning to new users:

"Vim isn't an editor designed to hold its users' hands. It is a tool, the use of which must be learned."

So why use Vim at all if it's so demanding and complex? Well, to put it simply, learning to use Vim will undoubtedly make you better at writing code. The process of learning how to use Vim almost serves as a microcosm of understanding a new programming language, in that before you start building anything, you've got to understand the fundamentals of its different states, core commands and the principles of navigation. The program relies heavily on keyboard shortcuts - so much so that if you've ever spent a significant stretch of time playing video games using your keyboard, Vim will likely come quite easily to you at first.

We can't wholeheartedly recommend Vim as an alternative to your current IDE, as it's very likely to rob you of both your sanity and hours of your precious time on your first foray, all before you even access your files. However, if you're looking to level up your skills as an engineer and gain a more meaningful understanding of a variety of core computing concepts, then don't be deterred. Venture into Vim. Remember, if all else fails, ':help quit'.
