Gmail vs Outlook: Which one is better for business?

Gmail vs Outlook is a long-running debate over which is best for business and productivity. We compared the two biggest email services to find out.

The question of whether to use Gmail or Outlook is a recurring theme for business users given the popularity of both services. Both are easily accessible, either through their own dedicated apps or via the web at mail.google.com and outlook.com respectively.

While the likes of Slack, Zoom, and Microsoft Teams would rather we stopped sending emails entirely, legacy messaging systems have proven time and again to be future-proof. And despite the myriad instant messaging services currently available to us, the fact remains that email is still king.

A key factor in email's enduring popularity might well be the need to have an email address to sign up to services like Slack and Zoom, making it something of a foundational service for modern life.

And there are two main contenders which meet our needs in this regard, prompting users to frequently ponder which is best suited to their individual circumstances.

Gmail vs Outlook: A history of both services

Gmail launched on April Fool's Day in 2004, but it certainly was no joke. Gmail quickly became the main challenger to Microsoft's established Outlook service. The web version of Outlook actually started life as 'Hotmail' in 1996, and was changed to MSN Hotmail, then Windows Live Hotmail before finally adopting the Outlook branding used by Microsoft's business email client in 2013.

These days, Outlook caters for business and professional users, offering a range of services matching that of Gmail, while the Mail app is a simplified app designed for the everyday user.

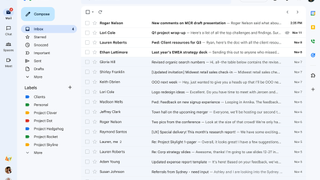

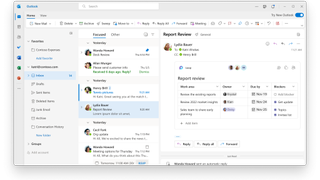

Gmail vs Outlook: Interface

Being the two biggest players in the email space naturally means we're talking about a clean pair of interfaces.

Outlook takes its cue from the Windows Modern UI style, also known as the Metro user interface, while Gmail was, until recently, considered the "uglier" of the two.

This changed in 2018 when a comprehensive redesign saw its aesthetics and user experience vastly improve - looking far more in line with Outlook.

Gmail had previously prioritised simplicity, although some would describe this as plain. The redesign improved on this simplistic feel while retaining the ability for users to concentrate on emails themselves rather than face any distractions.

The reading pane on Outlook is switched off by default, but can be enabled quite simply. Gmail also features a reading pane, which can be accessed through the settings menu.

Gmail vs Outlook: Folders, labels, and searches

One of the most significant things Google did to differentiate Gmail from other email services was to forgo folders in favour of labels. This means that messages can be tagged with specific labels depending on your organisational system, with individual emails capable of carrying multiple labels.

The decision to support labels instead of folders remains a rather controversial topic for some users. However, this does offer an advantage in that emails can be organised into several places at once without the need for messy duplicates.

If Gmail is set up through a traditional email client, labels will appear as folders, and emails with multiple labels will appear in multiple folders.
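The way labels surface as folders in a traditional client can be sketched in a few lines of Python. This is only an illustrative model, not how Gmail or any client implements it; the message data, label names, and the `folder_view` helper are all hypothetical.

```python
from collections import defaultdict

# Hypothetical messages: each one carries a set of labels (names invented here).
messages = [
    {"subject": "Q3 invoice", "labels": {"Finance", "Clients"}},
    {"subject": "Team lunch", "labels": {"Social"}},
]


def folder_view(msgs):
    """Group subjects by label, the way a folder-based client would list them."""
    folders = defaultdict(list)
    for msg in msgs:
        for label in sorted(msg["labels"]):
            folders[label].append(msg["subject"])
    return dict(folders)


view = folder_view(messages)
# The single "Q3 invoice" message is listed under both "Finance" and "Clients".
```

Only one copy of each message exists in this model; the folder listing is just a view built from the labels.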

Outlook does have some good organisational features and includes a traditional folder structure, which does make it an easier experience for some users. The service also has a system of categories. These differ from labels in that they are tags for messages rather than pseudo-folders.

You can apply more than one category to a message, but this will not affect its place in folders. Outlook also automatically tags certain messages with categories such as Documents, Photos, Newsletters, etc. These are known as Quick View folders.

Search is probably why Gmail exists at all. It was part of its raison d'etre when first conceived: the convenience of an email service that could be searched as easily as one would use a search engine. Simply typing search terms into the search bar at the top of the webpage should unearth what you're looking for.

Users can employ more advanced methods by typing in shortcuts such as "from:" or "to:". Additionally, Gmail is reasonably powerful at deciphering typos, able to deliver messages related to or closely resembling search terms rather than taking purely what it is given by a user.
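As a rough illustration of how these shortcuts combine, the sketch below assembles a search string from the "from:" and "to:" operators mentioned above. The `build_query` helper and the example address are hypothetical; the resulting text is simply what you would type into the search bar.

```python
def build_query(text="", sender=None, recipient=None):
    """Combine free text with the from: and to: search shortcuts."""
    parts = []
    if sender:
        parts.append(f"from:{sender}")
    if recipient:
        parts.append(f"to:{recipient}")
    if text:
        parts.append(text)
    return " ".join(parts)


# Example address is a placeholder, not a real account.
query = build_query("quarterly report", sender="alice@example.com")
print(query)  # from:alice@example.com quarterly report
```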

Meanwhile, Outlook boasts similar search facilities with advanced methods of finding the right information quickly. The Quick View folders enable users to instantly search through messages for the last few emails containing photos, for example.

But while Outlook's search function is good enough to do the job, it's nothing exciting, and nowhere near as polished as Gmail.

Gmail vs Outlook: Connectivity

Gmail supports both POP and IMAP, meaning that pretty much any email client on most operating systems will work off the bat with the email service. Even Microsoft Outlook will play nicely with it.

Outlook also supports POP and IMAP. Although it has faced claims of connectivity issues in the past, it generally handles both well. Outlook also supports Microsoft ActiveSync, which is used to synchronise an Exchange mailbox with a mobile device.

Gmail vs Outlook: Storage and attachment limits

Gmail offers 15GB of storage for free, but this is shared across Gmail and Google Drive. However, users who choose to upgrade to Google One can expand their total storage space to 100GB or more.

Microsoft also offers free users 15GB of storage per Outlook account, though this can be increased to 50GB if you have an active Microsoft 365 subscription on your account.

Outlook users looking to send large files by email might find themselves disappointed, as the service has a 20MB combined attachment limit. For anything larger than this, Microsoft recommends uploading the files to a cloud storage service such as OneDrive and then sharing the link to your files in the email instead.

Gmail also limits attachments to 25MB. In addition, the Gmail app only allows you to upload one image at a time. The images are displayed as thumbnails, however, which allows you to check that you've sent the right files and see what you've received before you download it.

Gmail will also detect if you've mentioned an attachment in the text of an email without actually attaching anything, and will ask whether you've forgotten to include it before you hit send, which is a useful feature.

If you attempt to upload anything larger than 25MB, Gmail will automatically prompt you with an option to upload the file to your Google Drive and then send it to your recipient as a shared Drive link. In this way, it can be considered a more seamless file-sharing experience than the stop-start route Microsoft has taken with Outlook.
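The attach-or-share decision described in this section can be sketched as a simple size check. This is a minimal illustration using the 20MB and 25MB figures quoted above; the `delivery_method` helper is hypothetical, and treating both limits as combined totals with 1MB equal to 1,048,576 bytes is an assumption.

```python
MB = 1024 * 1024  # assumption: 1MB counted as 1,048,576 bytes

# Attachment limits as quoted in this article, in megabytes.
LIMITS_MB = {"gmail": 25, "outlook": 20}


def delivery_method(service, file_sizes_bytes):
    """Return 'attach' if the combined size fits the limit, else 'share_link'."""
    total = sum(file_sizes_bytes)
    limit = LIMITS_MB[service] * MB
    return "attach" if total <= limit else "share_link"


# A single 22MB file fits under Gmail's limit but not Outlook's.
print(delivery_method("gmail", [22 * MB]))    # attach
print(delivery_method("outlook", [22 * MB]))  # share_link
```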

Gmail vs Outlook: Extensions and integrations

Gmail

Being part of the Google Workspace, Gmail is tightly integrated with a range of productivity tools, making it incredibly useful as a workplace tool – beyond just an email app. This integration is also seamless, meaning you can open attachments and other media with tools like Google Docs and Google Sheets.

It’s because of this seamless experience that you’re able to work on files immediately inside Gmail before they are automatically saved to Google Drive – and because you don’t ever have to leave the Google ecosystem, you don’t need to worry about third-party applications potentially changing the file extensions of your documents.

Third-party applications are supported, however, and allow for integrations with various customer relationship management tools, for example. There is a whole range of ISVs with tools on Google’s Apps marketplace, all of which will play nicely with Gmail.

This third-party integration even extends to social media platforms, and it’s possible to import your contacts and social network from these sites directly to Gmail’s address book.

Gmail’s built-in language tools are also a highlight. For those that encounter messages composed in languages other than English, Google Translate is on hand to quickly translate the contents of the message, with a single button. This is a simple function that is missing from Outlook.

Outlook

Outlook handles most of its extensions and integrations through its OneDrive and Microsoft 365 app family. Regardless of the platform or device you are using, Outlook’s integration experience is largely the same across the board.

Unfortunately, some of the add-ons don’t apply to specific kinds of devices or messages, but thankfully the extensions are supported in Outlook on the web in Office 365, Outlook 2013, and even in Outlook 2016 for Mac.

Despite this, you can easily access OneNote Online, PowerPoint, Excel, and Word through Outlook, as well as something called the Sway tool which helps users to put together multimedia stories and is highly acclaimed.

You can install an add-in by opening the Office Store from within Outlook, or by going to Microsoft AppSource.

Gmail vs Outlook: Spam, filters, and email management

Gmail’s spam filtering function works effectively. And while the occasional spam email does make its way through the filter, you can rest assured knowing that false positives are basically non-existent. Gmail is also useful for filtering out emails that you don’t want which aren’t categorised as spam.

Gmail does match ads with email content, which bothers some users, but these ads are usually filtered into the social or promotions tabs and don't interfere with inbox use. It also offers quite useful tools to prevent you accidentally deleting emails that you need.

Outlook's email and spam filtering is less sophisticated (much of it has to be done manually), but has some features that put it ahead of Gmail. One such feature is Sweep.

Sweep can be used to quickly delete unwanted emails in your inbox, and gives you options to automatically delete all incoming emails from a particular sender, to keep only the latest email, or to delete emails older than 10 days.
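The Sweep options described above amount to simple rules applied per sender. The sketch below models two of them on an in-memory list; it is not Outlook's implementation or API, and the function names, message fields, and sample data are all hypothetical.

```python
from datetime import datetime, timedelta


def sweep_older_than(emails, sender, now, days=10):
    """Drop messages from `sender` received more than `days` days before `now`."""
    cutoff = now - timedelta(days=days)
    return [e for e in emails
            if not (e["sender"] == sender and e["received"] < cutoff)]


def sweep_keep_latest(emails, sender):
    """Keep only the most recent message from `sender`; leave others untouched."""
    from_sender = [e for e in emails if e["sender"] == sender]
    if not from_sender:
        return list(emails)
    latest = max(from_sender, key=lambda e: e["received"])
    return [e for e in emails if e["sender"] != sender or e is latest]


# Hypothetical inbox used purely for illustration.
now = datetime(2024, 1, 31)
inbox = [
    {"sender": "news@example.com", "received": datetime(2024, 1, 5)},
    {"sender": "news@example.com", "received": datetime(2024, 1, 29)},
    {"sender": "boss@example.com", "received": datetime(2024, 1, 2)},
]

recent_only = sweep_older_than(inbox, "news@example.com", now)
latest_only = sweep_keep_latest(inbox, "news@example.com")
```

In both cases the message from the other sender is left alone, which mirrors the per-sender nature of the feature as described.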

Is Gmail better than Outlook?

Both Gmail and Outlook are sophisticated email platforms that have gone through many years of refinement. As such, they are both impressive tools that are neatly integrated into their respective ecosystems. Therefore, this is mostly a matter of user preference.

Neither service offers one standout feature that puts it ahead of the other, and both offer a somewhat similar user experience, albeit with a different menu structure.

Gmail’s search function may be slightly easier to use than Outlook’s, and we find Gmail’s user interface more appealing, but there isn’t anything here to justify switching. Both Outlook and Gmail are deeply integrated in their respective ecosystems, and so both will serve you well.

Clare is the founder of Blue Cactus Digital, a digital marketing company that helps ethical and sustainability-focused businesses grow their customer base.

Prior to becoming a marketer, Clare was a journalist, working at a range of mobile device-focused outlets including Know Your Mobile before moving into freelance life.

As a freelance writer, she drew on her expertise in mobility to write features and guides for ITPro, as well as regularly writing news stories on a wide range of topics.