Best service management software

We round up the best software choices to help you manage your business more effectively

Service management software, which covers functions such as order management, hardware and software maintenance, diagnostics and troubleshooting, helps businesses deliver their products to end users.

With such a variety of service management software to choose from, what's important is finding the right one for your organisation, whether you employ field service engineers who enter homes or businesses to fix hardware or systems, or you need to provide services remotely from the office.

Here is a selection of some of the best service management software available now.

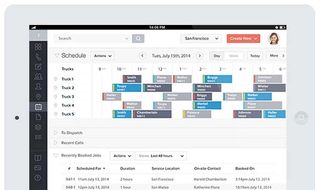

Vonigo

Vonigo is an end-to-end service management tool that helps businesses manage their workforce, wherever they may be. It's a modular platform, so you can mix and match the different services you need, such as scheduling, online booking, work order management, estimating, dispatch, routing, GPS, CRM, invoicing, payments and reporting.

Customers get real-time updates about their requests, and the online booking service helps keep customer service as effective as possible.

If you're unsure what you need, Vonigo's smooth onboarding process helps ensure you're using everything that could make your life easier.

Price: $75/user/month

Platforms: Web, iOS

URL: Vonigo

Protean

As well as helping you schedule your staff's tasks, Protean manages equipment and parts so you have the right parts at the right time to fully service your customers with as little disruption as possible.

Staff collaboration is fully supported too, allowing both in-office and field staff to communicate with each other, seamlessly servicing customers.

Protean will also manage the customer lifecycle for you, including the initial sale, service orders and invoicing, to ensure your accounts are always up to date.

Price: 50/user/month

Platforms: Windows, Android

URL: Protean

UpKeep

If you work in the maintenance or asset management industry, UpKeep is the perfect software to keep track of jobs, assets and staff to ensure everything moves like clockwork.

The platform puts mobile first, with apps available on iOS and Android as well as desktop, so technicians, engineers and remote workers can always stay up to date with what's happening in the office.

It allows you to create work orders wherever you are and get notifications when jobs are updated. If any of your assets go down, you'll be notified so you can take immediate action to minimise disruption across the board.

Price: $24.99/user/month

Platforms: Web, iOS, Android

URL: UpKeep

Apptivo Work Orders

Apptivo's Work Orders app is just one of the products it offers the enterprise. If you're already using Apptivo's suite of apps to power your business, Work Orders is the easy option for integrating job planning too.

You can create work orders on the go using its web-based app. The customer can instantly accept or reject an order, and staff can then be dispatched immediately to the destination. When the job's complete, you can invoice straight from the app for a quick turnaround.

For tracking employees, you can make use of the easy-to-understand calendar built into Apptivo, documenting exactly where your staff are at any one time.

Price: From free

Platforms: Web

URL: Apptivo Work Orders

Housecall Pro

Housecall Pro, as the name would suggest, has been designed for businesses that need people to enter homes, such as service engineers, plumbers, electricians, healthcare workers and more.

Not only does it allow businesses to schedule and dispatch the right person to deal with the job, it also includes live chat functionality so the field worker can communicate with the customer or other members of staff if needed.

Another bonus is the ability to track exactly where your employees are at any time, while invoicing and payments straight from the app can keep your cashflow in order.

Price: $39/user/month

Platforms: Web, iOS

URL: Housecall

Clare is the founder of Blue Cactus Digital, a digital marketing company that helps ethical and sustainability-focused businesses grow their customer base.

Prior to becoming a marketer, Clare was a journalist, working at a range of mobile device-focused outlets including Know Your Mobile before moving into freelance life.

As a freelance writer, she drew on her expertise in mobility to write features and guides for ITPro, as well as regularly writing news stories on a wide range of topics.