How to encrypt files and folders in Windows 10

Here’s how to make your sensitive data unreadable to prying eyes

It's safe to assume that you will, at some point, be hacked. However, if you're using Microsoft's popular operating system, there is a way to encrypt files and folders in Windows 10 – rendering them useless to anyone who does get hold of them.

By using encryption, users can convert their sensitive data into unreadable ciphertext, which can lower the risk of infiltration, theft and subsequent fraud.

Windows 10's built-in encryption tool is relatively easy to use and, once configured, will place a lock symbol on the file or folder, signifying that its contents are encrypted and can only be opened from your user account.

Disclaimer

There are a few points to remember here. Firstly, an encrypted file can lose its encryption when transmitted via a network or email. Secondly, you need to extract the contents of a compressed file or folder before you encrypt it. Finally, the tool doesn't protect files from being deleted, so you should always back up encrypted data and store it offline.

How to encrypt files and folders in Windows 10

There are a number of ways to encrypt files and folders in Windows 10. For the purpose of this guide, we will be covering the following tools:

- Windows encrypted file system (EFS)

- BitLocker

Encrypt files and folders using Windows encrypted file system

Microsoft’s EFS service offers support for encrypting individual files and folders in Windows 10 and in earlier Windows versions going back to Windows 2000, although it is not available in Home editions. To enable EFS encryption, follow these steps:

- Right-click on the file or folder you want to encrypt and select “Properties”.

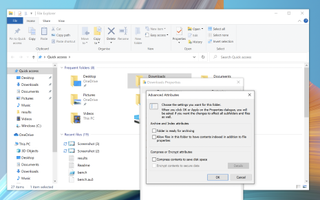

- In the “General” tab of “Properties,” click on the “Advanced” button.

- In the “Advanced Attributes” dialogue box, under the “Compress or Encrypt Attributes” section, check “Encrypt contents to secure data”.

- Click “OK”.

- Click “Apply”.

- If encrypting a folder, a window will pop up asking you to choose between “Apply change to this folder only” and “Apply changes to this folder, subfolders and files.” Select your preference and click “OK” to save the change(s).
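
The same steps can also be scripted with Windows' built-in `cipher` command. The following is a minimal Python sketch rather than a definitive implementation: the helper names `build_efs_encrypt_command` and `run_on_windows` and the example folder path are assumptions made for illustration, and only the `/e` and `/s:` switches of `cipher` are used.

```python
import subprocess
import sys


def build_efs_encrypt_command(path: str, recurse: bool = False) -> list[str]:
    """Build a cipher.exe command line that encrypts `path` with EFS."""
    if recurse:
        # /s:<dir> applies the operation to the folder and everything inside it,
        # mirroring "Apply changes to this folder, subfolders and files".
        return ["cipher", "/e", f"/s:{path}"]
    # Without /s, only the named file or folder itself is marked as encrypted.
    return ["cipher", "/e", path]


def run_on_windows(command: list[str]) -> int:
    """Run the command and return its exit code; refuse to run off Windows."""
    if sys.platform != "win32":
        raise RuntimeError("cipher.exe is only available on Windows")
    return subprocess.run(command, check=False).returncode


# Hypothetical folder, used purely for illustration.
command = build_efs_encrypt_command(r"C:\Users\alice\Documents\Private", recurse=True)
print(" ".join(command))
```

Building the argument list separately from running it makes it easy to review the exact command before anything on disk is changed.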

How to back up EFS encryption keys

The encryption process is now complete, and Windows will automatically create an encryption key and save it locally to your PC. Files and folders you've encrypted with EFS will feature a small padlock icon in the top-right corner of the thumbnail. Only you can access the encrypted files or folders. But there’s more to it.

To avoid file loss if the key gets corrupted, Windows will prompt you to back up the encryption key immediately after encryption. Back up your EFS encryption key with the following steps:

- In the “Back up your file encryption certificate and key” prompt, choose “Back up now”.

- Ensure you have a USB flash drive plugged into your PC.

- Click “Next” to create your encryption certificate.

- Select the “.PFX” file format to export your certificate file and click “Next”.

- Check the “Password” box and enter a new password.

- Navigate to your USB drive.

- Name your encryption backup file and click “Save”.

- Click “Next”.

- Click “Finish”.
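
The key backup can also be started from the command line with `cipher /x`, which exports the current user's EFS certificate and keys to a .PFX file and asks for a protecting password interactively. The sketch below is illustrative only: the function name, the `E:` drive letter and the default `system_drive` value are assumptions, and the drive check is a simple safeguard added here, not something `cipher` enforces.

```python
import ntpath


def build_efs_backup_command(backup_path: str, system_drive: str = "C:") -> list[str]:
    """Build a cipher.exe command that backs up the user's EFS certificate and key."""
    drive, _ = ntpath.splitdrive(backup_path)
    if not drive:
        raise ValueError("give a full path including a drive letter, e.g. E:\\efs-key-backup")
    if drive.upper() == system_drive.upper():
        # A backup kept next to the encrypted data is lost along with it.
        raise ValueError("store the key backup on removable media, not the system drive")
    # /x writes the certificate and keys to <backup_path>.PFX.
    return ["cipher", "/x", backup_path]


# Hypothetical USB drive letter and file name, used purely for illustration.
print(" ".join(build_efs_backup_command(r"E:\efs-key-backup")))
```

`ntpath` is used instead of `os.path` so the drive-letter parsing behaves the same wherever the script is drafted.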

How to decrypt folders encrypted with EFS

Decrypting the encrypted file/folder is just as easy with the following steps:

- Right-click on the file or folder you want to decrypt and select “Properties”.

- In the “General” tab of “Properties,” click on the “Advanced” button.

- In the “Advanced Attributes” dialogue box, under the “Compress or Encrypt Attributes” section, uncheck the “Encrypt contents to secure data” option.

- Click “OK”.

- Click “Apply”.
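
Decryption can be scripted in the same way with `cipher /d`, and the result can be checked by reading the file's attributes. This is a hedged sketch: the function names are assumptions made for illustration, and `st_file_attributes` is only populated by `os.stat` on Windows.

```python
import stat


def build_efs_decrypt_command(path: str, recurse: bool = False) -> list[str]:
    """Build a cipher.exe command line that removes EFS encryption from `path`."""
    if recurse:
        # /s:<dir> applies the operation to the folder and everything inside it.
        return ["cipher", "/d", f"/s:{path}"]
    return ["cipher", "/d", path]


def is_efs_encrypted(file_attributes: int) -> bool:
    """Return True if the Windows attribute bits include the 'encrypted' flag."""
    return bool(file_attributes & stat.FILE_ATTRIBUTE_ENCRYPTED)


# On Windows, the attribute bits would come from os.stat(path).st_file_attributes.
sample_attributes = stat.FILE_ATTRIBUTE_ARCHIVE | stat.FILE_ATTRIBUTE_ENCRYPTED
print(is_efs_encrypted(sample_attributes))
```

Checking the attribute after running the command is a quick way to confirm that the padlock icon has a matching change on disk.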

Note: The user who encrypted a file can access it locally, but it will remain inaccessible to all other user accounts. You may also use a DVD or portable hard disk to back up your encryption key.

Disclaimer

The next section will cover BitLocker – a full-disk encryption solution that enables you to encrypt an entire hard drive at once. When combined with a PC’s trusted platform module (TPM), BitLocker can provide advanced security features, including hardware-level encryption. Your computer needs a TPM chip of version 1.2 or later to support BitLocker.

To check if your computer has a TPM chip, press the Windows key + X, click 'Device Manager', then expand 'Security devices'. Look for the 'Trusted Platform Module' entry, which includes the version number.
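
The version check can also be automated. The sketch below assumes the TPM version is read from the `SpecVersion` property of the `Win32_Tpm` WMI class, which reports a string such as `2.0, 0, 1.38`; the PowerShell query shown usually needs an administrator session, and the function name and sample strings are hypothetical.

```python
# Assumed query for reading the TPM specification version on Windows.
TPM_QUERY = [
    "powershell", "-NoProfile", "-Command",
    "(Get-CimInstance -Namespace root/cimv2/Security/MicrosoftTpm "
    "-ClassName Win32_Tpm).SpecVersion",
]


def tpm_meets_minimum(spec_version: str, minimum: tuple[int, int] = (1, 2)) -> bool:
    """Check whether a SpecVersion string reports at least the minimum TPM version."""
    # The first comma-separated field holds the major.minor specification version.
    first_field = spec_version.split(",")[0].strip()
    major, _, minor = first_field.partition(".")
    return (int(major), int(minor or "0")) >= minimum


# Sample strings, used purely for illustration.
for sample in ("2.0, 0, 1.38", "1.2, 2, 3", "1.1, 0, 0"):
    print(sample, "->", tpm_meets_minimum(sample))
```

Comparing the version as a pair of integers avoids the pitfalls of comparing version strings alphabetically.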

How to set up BitLocker on Windows 10

To set up BitLocker on your Windows 10 PC, use the following steps:

- Press the Windows key + X keyboard shortcut to open the “Power User” menu.

- Go to “Control Panel” > “System and Security” > “BitLocker Drive Encryption”.

- Under the “BitLocker Drive Encryption” section, click on “Turn on BitLocker”.

- Set a password and click “Next”.

As with EFS-based encryption, you now have a few options to save a recovery key to regain access to your files if you lose or forget your password. Here is a list of the options available:

- Save to your Microsoft account

- Save to a USB flash drive

- Save to a file

- Print the recovery key

How to set a BitLocker recovery key on Windows 10

- Select one of the four options above and click “Next”.

- Choose how much of the drive you want to encrypt – the entire drive or only the used disk space.

- Choose either new encryption mode (best for fixed drives on your device) or compatible mode (best for detached drives you can remove from your device).

- Click "Next".

- Check the “Run BitLocker system check” option.

- Click “Continue”.

- Restart your computer.

Upon reboot, BitLocker will prompt you to enter your encryption password to unlock the drive. Type the password and press “Enter.” You can verify BitLocker is turned on by looking for a padlock icon on your encrypted drive’s thumbnail.
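
For reference, the choices made in the wizard map onto switches of Windows' `manage-bde` command-line tool. The following Python sketch only builds the command line and makes several assumptions: the function name is hypothetical, the recovery key is generated as a numerical recovery password (`-RecoveryPassword`), "new encryption mode" is taken to mean XTS-AES 128 and "compatible mode" AES-CBC 128, and the command would have to be run from an administrator prompt.

```python
import re


def build_bitlocker_enable_command(
    drive: str, used_space_only: bool = True, new_mode: bool = True
) -> list[str]:
    """Build a manage-bde command line that turns on BitLocker for `drive`."""
    if not re.fullmatch(r"[A-Za-z]:", drive):
        raise ValueError("drive must be a letter followed by a colon, e.g. C:")
    # -RecoveryPassword adds a numerical recovery password as a key protector.
    command = ["manage-bde", "-on", drive, "-RecoveryPassword"]
    # xts_aes128 corresponds to the new mode, aes128 to the compatible mode.
    command += ["-EncryptionMethod", "xts_aes128" if new_mode else "aes128"]
    if used_space_only:
        # Encrypt only the disk space currently in use instead of the whole drive.
        command.append("-UsedSpaceOnly")
    return command


print(" ".join(build_bitlocker_enable_command("C:")))
# Progress can afterwards be inspected with: manage-bde -status C:
```

The sketch leaves out `-SkipHardwareTest`, so the system check that the wizard offers as an option is not skipped.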

How to disable BitLocker on Windows 10

- Open File Explorer.

- Right-click the encrypted drive.

- Select "Manage BitLocker".

- For each encrypted partition, choose to either suspend BitLocker, which pauses protection temporarily, or turn it off, which decrypts the drive.
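
The two choices in the last step correspond to different `manage-bde` operations. As a minimal sketch, with a hypothetical function name and to be run from an administrator prompt, the difference looks like this:

```python
def build_bitlocker_stop_command(drive: str, suspend: bool) -> list[str]:
    """Build a manage-bde command line that suspends or turns off BitLocker."""
    if suspend:
        # Disabling the key protectors suspends protection; data stays encrypted.
        return ["manage-bde", "-protectors", "-disable", drive]
    # -off decrypts the drive and turns BitLocker off entirely.
    return ["manage-bde", "-off", drive]


print(" ".join(build_bitlocker_stop_command("C:", suspend=True)))
print(" ".join(build_bitlocker_stop_command("C:", suspend=False)))
```

Suspending is the lighter option, because protection can be resumed without re-encrypting the drive.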

Disclaimer

BitLocker doesn’t support dynamic disk encryption. Decryption may take a while, depending on the size of your encrypted drive. However, you can continue using your computer during the process.

A security system is only as strong as its weakest point, which is why it helps to take small but decisive steps toward data encryption.

BitLocker can protect PCs’ operating systems against offline attacks, and EFS offers additional file-level encryption for security separation between multiple users of the same computer. You can also combine protections by choosing to use EFS to encrypt files on a BitLocker-protected drive.

Dale Walker is the Managing Editor of ITPro, and its sibling sites CloudPro and ChannelPro. Dale has a keen interest in IT regulations, data protection, and cyber security. He spent a number of years reporting for ITPro from numerous domestic and international events, including those held by IBM, Red Hat and Google, and has been a regular reporter for Microsoft's various yearly showcases, including Ignite.